Google penalties are a webmaster’s worst nightmare. There is more to Google than its fantastic algorithms and learning mechanisms. It also has an enormous army of quality evaluators who assess quality and penalize websites.

When Google’s algorithms find something “suspicious” on a website, or when people have reported the website to Google multiple times, the site may be manually reviewed, and relevant actions are likely to be taken.

Users have the power to report websites to Google when they come across pages with spam, paid links or malware in the results.

Even if you don’t face a manual penalty, you still could be affected by an algorithmic filter.

But how will you know if you have been hit by an algorithmic filter?

With Penguin 4.0 in action, identification of algorithmic penalties might be a little harder than it was in the past.

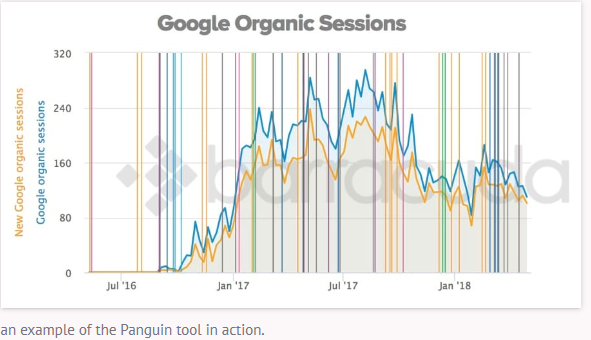

The “Panguin” tool should probably be your first port of call.

This classy tool connects to Google Analytics and overlays known algorithmic updates over a graph of your organic search traffic.

Image source: Barracuda

Each of the lines depicts a known algorithm update, which makes identification of potential issues hassle-free.

If you do not use Google Analytics, you can still perform a manual check by comparing the organic traffic graph in Site Explorer against Marie’s list of algorithm updates.
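
The manual check described above can be approximated in a few lines of Python. This is a minimal sketch, assuming you have exported daily organic visits and copied the dates from a list of known algorithm updates. The function names, the 7-day window, the 20% drop threshold, and the sample data are all made up for illustration and are not part of any tool mentioned here.

```python
from datetime import date, timedelta
from statistics import mean


def traffic_change_around(daily_visits, update_day, window=7):
    """Relative change in mean daily visits after vs. before update_day.

    daily_visits maps date -> organic visits. The update day itself is
    counted in the "after" window. Returns None if a window has no data.
    """
    before = [daily_visits[update_day - timedelta(days=i)]
              for i in range(1, window + 1)
              if update_day - timedelta(days=i) in daily_visits]
    after = [daily_visits[update_day + timedelta(days=i)]
             for i in range(window)
             if update_day + timedelta(days=i) in daily_visits]
    if not before or not after or mean(before) == 0:
        return None
    return (mean(after) - mean(before)) / mean(before)


def suspicious_updates(daily_visits, updates, threshold=-0.2, window=7):
    """List (name, change) for updates followed by a drop past the threshold."""
    flagged = []
    for name, day in updates.items():
        change = traffic_change_around(daily_visits, day, window)
        if change is not None and change <= threshold:
            flagged.append((name, round(change, 2)))
    return flagged


# Made-up data: 14 days at 1000 visits, then 14 days at 600 visits.
start = date(2020, 1, 1)
visits = {start + timedelta(days=i): (1000 if i < 14 else 600)
          for i in range(28)}
updates = {
    "hypothetical update A": date(2020, 1, 15),  # coincides with the drop
    "hypothetical update B": date(2020, 1, 8),   # no drop nearby
}
print(suspicious_updates(visits, updates))
```

A drop that lines up with an update date is only a hint. Seasonality, tracking changes, or site outages can produce the same pattern, so treat any flagged update as a starting point for investigation.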

What kinds of penalties can your website get?

Mainly, there are two types of actions that could be displayed on the Manual Actions page:

- Sitewide matches, which affect an entire website;

- Partial matches, applicable to an individual URL or a section of a website.

Each Manual Action notification is accompanied by “Reason” and “Effects” information.

The list of common manual actions includes:

- Hacked site

- User‐generated spam

- Spammy free hosts

- Spammy structured markup

- Unnatural links to your site

- Thin content with little or no added value

- Cloaking and/or sneaky redirects

- Unnatural links from your site

- Pure spam

Here are 5 important penalties you need to be aware of, and their fixes

Cloaking/Sneaky Redirects

Cloaking is the act of showing users different pages than the ones shown to Google.

Sneaky redirects send users to a different page than the one shown to Google. Both actions violate webmaster guidelines.

Fix:

- Try navigating to Google Search Console > Crawl > Fetch as Google, then fetch pages from the affected portions of your website.

- Compare the content on your web page to the content fetched by Google.

- Resolve any variations between the two so they end up being the same.
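
The comparison in the steps above can also be roughed out locally. The following is a sketch only, assuming that cloaking on your site keys off the `User-Agent` header. Requesting a page with a Googlebot-style user agent is not the same as Fetch as Google, and it will not reveal cloaking based on IP address. The helper names `text_content`, `similarity`, and `fetch` are hypothetical.

```python
import difflib
import re
import urllib.request
from html.parser import HTMLParser

GOOGLEBOT_UA = ("Mozilla/5.0 (compatible; Googlebot/2.1; "
                "+http://www.google.com/bot.html)")
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"  # simplified example


class _TextExtractor(HTMLParser):
    """Collect text nodes, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def text_content(html):
    """Return the page text with whitespace collapsed."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return re.sub(r"\s+", " ", " ".join(parser.chunks)).strip()


def similarity(html_a, html_b):
    """Ratio in [0, 1]; 1.0 means the extracted text is identical."""
    matcher = difflib.SequenceMatcher(
        None, text_content(html_a), text_content(html_b))
    return matcher.ratio()


def fetch(url, user_agent):
    """Download a page while sending the given User-Agent header."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


# Example usage against your own site (not run here, needs network access):
#   as_bot = fetch("https://example.com/page", GOOGLEBOT_UA)
#   as_user = fetch("https://example.com/page", BROWSER_UA)
#   print(similarity(as_bot, as_user))
```

A ratio well below 1.0 suggests the two responses differ and are worth inspecting by hand. Small differences are common on pages with rotating or personalized content, so this is a screening aid, not a verdict.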

First Click Free Violation

A website violates the policy if it requires users arriving from Google to register, subscribe, or log in to see the full content.

This penalty is levied against websites that show their full content to Google but restrict what users can view, especially users arriving from Google’s services, in violation of Google’s First Click Free policy.

Image source: Marketing Land

Fix:

- The content shown to users arriving from Google’s services must be the same as the content shown to Google. Make any edits required to come into compliance.

- Submit a reconsideration request after fixing the issue.

Cloaked Images

Images are treated as cloaked when they:

- Are obscured by another image

- Are different from the image served

- Redirect users away from the image

All of the above are considered cloaking.

Fix:

- Show the exact same image to Google as to the users of your site.

- Submit a reconsideration request after fixing the issue.

Hacked Site

Hackers are constantly looking for ways to exploit WordPress and other content management systems to inject malicious content and links.

Fix:

- Contact your Web host and build a support team.

- Quarantine your site so that it cannot cause further harm.

- Assess the damage caused by the spam/malware.

- Identify the vulnerability to find out how the hacker got in.

- Request a review and ask Google to reconsider your hacked labeling.
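
When assessing the damage, one simple check is to list the external hosts a page links to and compare them with the hosts you expect. The sketch below assumes injected spam shows up as ordinary `<a href>` links in the HTML. It will not find links added by JavaScript or malware hidden elsewhere, and `unexpected_link_hosts` is a hypothetical helper name.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class _LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def unexpected_link_hosts(html, allowed_hosts):
    """Return the sorted external hosts linked from html that are not allowed."""
    collector = _LinkCollector()
    collector.feed(html)
    collector.close()
    hosts = set()
    for href in collector.hrefs:
        host = urlparse(href).hostname  # None for relative links
        if host and host not in allowed_hosts:
            hosts.add(host)
    return sorted(hosts)


# Made-up page with one internal, one expected, and one suspicious link.
page = (
    '<a href="/about">About</a>'
    '<a href="https://example.com/">Home</a>'
    '<a href="http://pills.example.net/buy">Buy now</a>'
)
print(unexpected_link_hosts(page, {"example.com"}))
```

Running this over saved copies of your pages gives a short list of hosts to review manually. An unfamiliar host is not proof of a hack, only a prompt to look closer.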

Hidden Text/Keyword Stuffing

This penalty is levied when Google finds that your site uses hidden text or keyword stuffing.

Fix:

- Navigate to Google Search Console > Crawl > Fetch as Google then fetch pages from the affected portions of your website.

- Search for text that is the same as, or similar in color to, the body of the web page.

- Search for text hidden using CSS styling or positioning.

- Remove or restyle any hidden text so that it’s obvious to a human user.

- Fix or remove any paragraphs of repeated words without context.

- Fix <title> tags and alt text containing strings of repeated words.

- Remove any other instances of keyword stuffing.

Submit a reconsideration request after fixing these issues.
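
Some of the checks in the list above can be partly automated. The sketch below is a rough screen under stated assumptions: it only inspects inline `style` attributes (not external stylesheets or text colored to match the background), and it flags a `<title>` or `alt` text as stuffed when a single word makes up at least half of a label with four or more words. The patterns, thresholds, and function names are illustrative choices, not rules published by Google.

```python
import re
from collections import Counter
from html.parser import HTMLParser

# Inline-style patterns that commonly hide text (illustrative, not exhaustive).
HIDING_PATTERNS = [
    r"display\s*:\s*none",
    r"visibility\s*:\s*hidden",
    r"font-size\s*:\s*0(px|em|%)?\s*(;|$)",
    r"text-indent\s*:\s*-\d{3,}",
]


class _Auditor(HTMLParser):
    def __init__(self):
        super().__init__()
        self.hidden_styles = []  # inline style values that hide content
        self.labels = []         # <title> text and alt attribute values
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        style = (attrs.get("style") or "").lower()
        if any(re.search(p, style) for p in HIDING_PATTERNS):
            self.hidden_styles.append(style)
        if attrs.get("alt"):
            self.labels.append(attrs["alt"])
        if tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title and data.strip():
            self.labels.append(data.strip())


def is_stuffed(text, min_words=4, max_share=0.5):
    """True when one word makes up at least max_share of a long enough label."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if len(words) < min_words:
        return False
    top_count = Counter(words).most_common(1)[0][1]
    return top_count / len(words) >= max_share


def audit(html):
    """Return hiding inline styles and stuffed title/alt labels found in html."""
    auditor = _Auditor()
    auditor.feed(html)
    auditor.close()
    return {
        "hidden_styles": auditor.hidden_styles,
        "stuffed_labels": [t for t in auditor.labels if is_stuffed(t)],
    }


# Made-up page for illustration.
sample = (
    "<html><head><title>Cheap shoes cheap shoes cheap shoes cheap</title></head>"
    '<body><p style="display: none">cheap shoes</p>'
    '<img src="a.png" alt="Red running shoe on a track">'
    '<p style="font-size: 0.9em">Welcome</p></body></html>'
)
print(audit(sample))
```

Anything the audit reports still needs a human look, since `display: none` is also used legitimately for menus, tabs, and accessibility helpers.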